
Getting Real: George

This month’s story, “George”, by Stephen Palmer provides a view of Artificial Intelligence (AI) in healthcare which is somewhat different to those we’ve explored in the Fictions series to date. In the story, we learn about a company called Goode Ltd. which is developing an AI representation of the English National Virtual Patient. This is an artificial representation of the average patient in the UK to be used for healthcare purposes. The AI is being developed for symptom comparisons and diagnosis of conditions and has not yet been released to the market. Early in the story, we learn about some concerns about the development of the software which sits behind the virtual patient named George. Through Mel’s experience, we see how developing technology without considering where current practices are flawed could result in equally flawed solutions.

The story starts with Mel’s consultation with Dr. Lee, which hopefully is not too familiar, although I am sure some readers will have experienced something similar. Health and care rely on human interaction, understanding and empathy. When you have a consultation, you expect to be treated fairly, with understanding and kindness, and Mel rightly does not find her treatment acceptable.

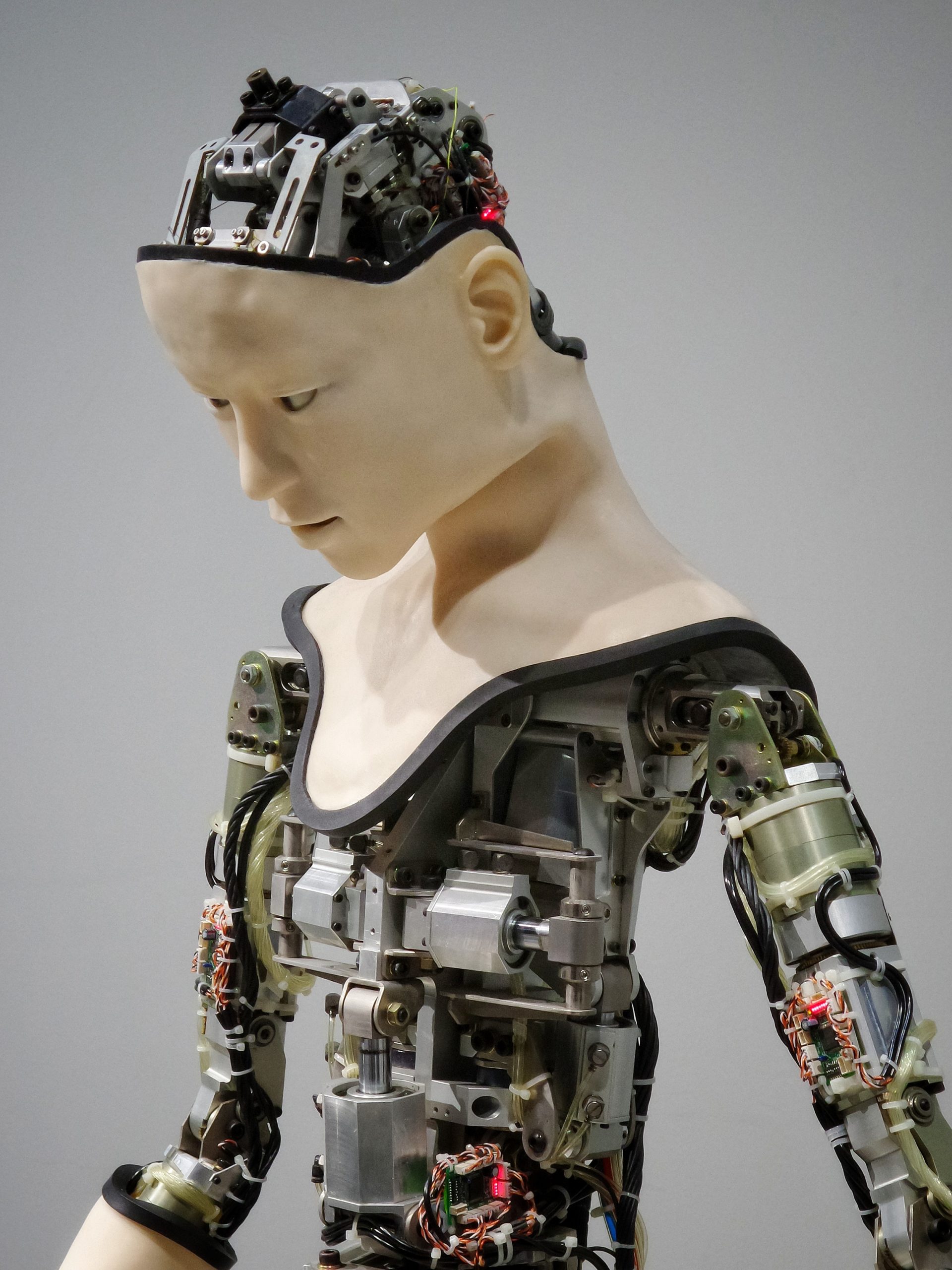

The experience of interacting with a computer or AI tool is a recurring theme throughout the story and it is interesting to read how a human-computer interface (HCI) develops and how the protagonist communicates with different types of interface. Many robots in real life and fictional settings are designed with human emotion in mind. The stories Ex Machina and I, Robot are two of my favourite representations of human features in robots and the subsequent interactions between robots and humans. Both serve as cautionary tales exposing some of the dangers of advanced technology and systems which mimic human behaviour and emotion. To me, it is surprising how uniform an approach we seem to take with the development of interfaces. We interact with a vast range of people in a work and social capacity, so it seems unusual to have so little variety in how we interact with computing systems. As a basic example, when using chatbots, some individuals very much enjoy interacting with an interface which mimics human behaviour; this can be through whimsical language choices or the chatbot taking time to “type” a response. However, others prefer an efficient interface, which prioritises finding a solution rapidly over any other features. It is also likely that this varies depending on aspects such as time of day and mood. Given how much recent technical development has related to personalization, it is almost surprising that such a uniform approach is often taken to interfaces. Similarly, in a clinical consultation a straightforward facts-based approach will suit some people well and a softer approach will suit others better.

Empathy is an interesting aspect of biology and is something still in development for artificial systems. In the “Getting Real” series, we have focused on what makes us human and some of the machine-based approaches for replicating this. We have not considered how many of the things we describe as human may not be as uniquely human as we like to believe. One example of this could be empathy. Researchers have demonstrated empathy-like behaviours in a range of species. In many of these studies, animals ranging from non-human primates to rodents and birds help others in distress without reward. It is difficult to determine exactly how these behaviours compare to human empathy, particularly in terms of the underlying complex emotional processes we know humans feel. Protective self-isolating behaviours have also been observed in ant colonies, lobsters, and vampire bats when infections are present. This may seem niche, but it has helped with the interpretation of behaviours for infection prevention during COVID-19. A recent paper in the journal Minds and Machines considered the role of empathy in AI systems for health and care and concluded that, “it is reasonable to assume that the best artificial carers may outperform the worst human practitioners”. If we think about the story here, a machine empathy approach would be a marked improvement over Dr. Lee.

Diagnostic applications are some of the most common AI tools being developed in healthcare. As things currently stand, a diagnosis can only be made by a trained human clinician or clinical specialist. A statistical model, AI approach or several technical tools can be used to detect a condition or provide insights to aid diagnosis. These could have accuracies and probabilities approaching 100% certainty; however, a human would need to provide the formal diagnosis. It is a subtle difference: diagnosis is the onward identification of a disease after the detection of biological markers or symptoms.

Mel is employed by Goode Ltd. in the Data Gathering Department, collecting real-world data for the AI model to be trained on. This sort of job role seems a little counterintuitive in a data-centric future. Human collection of data is not considered the gold standard. Automated data capture systems reduce human error and are generally much easier to work with and more consistent. This is a large part of the need to digitize clinical and care records. In computer science and data analytics, “garbage in, garbage out” is a mantra-like comment on model quality in relation to data quality. The information or insight gained from the analysis of a dataset is limited by the quality of the original data. This concept is at the core of the story and Mel’s concerns about George. We do not explicitly know what datasets the model underpinning George has been trained on. In the story, Roy makes it clear that age and ethnicity are well-covered. However, it seems there may be quite a major blind spot in terms of sex and gender. In Invisible Women, Caroline Criado-Perez discusses the dangers of a world where products, safety features and medicines are designed for men rather than women, and the book highlights why products like George are not fit for purpose.

Having non-representative data is a significant flaw for an AI healthcare tool. Healthcare and life sciences research also disproportionately represents male data. Many clinical trials have more white, single, male subjects than any other group, and this is an issue that has been known about for a long time. The problem goes beyond who has taken part in a clinical trial, and there are similar issues with the sex of animals in laboratory experiments. This is a real concern for the development and selection of appropriate treatments, the understanding of medical conditions and the development of AI models. Given that George is being developed as a sort of digital twin for healthcare symptoms and diagnostics, it is deeply concerning that such issues exist so close to market. Many researchers, policymakers and technology developers are working together to develop standards for AI development in healthcare. This is to ensure patient safety is protected as AI is adopted in an increasing number of medical products.

If, in time, the right models can be developed, high-quality data collected and empathic interfaces introduced, we will then need to consider the role of humans “in the loop”. The I Am Echoborg installation is an interesting thought experiment and group experience which imagines the future of HCIs and considers how a human actor or “Echoborg” could be employed to be the human face of an AI chatbot. Looking ahead, much more work is needed to include a vast range of demographics in datasets for appropriate treatment. This would ensure that people can comfortably interface with technology solutions and be confident they are accurately and fairly represented in the data underpinning them.

There were many other curious themes in “George”, notably the open-source “leaking” of code and intellectual property, which would be interesting to explore in more depth another time.